I've noticed a trend within the rationalist movement of favoring long and convoluted articles that reference other long and convoluted articles: the more inaccessible to the general public, the better.

I don't want to contend that there's anything inherently wrong with such articles; I contend precisely the opposite: there's nothing inherently wrong with short and direct articles.

One example of significant simplicity is Einstein's famous E=mc² paper ("Does the Inertia of a Body Depend Upon Its Energy Content?"), which is merely three pages long.

Can anyone contend that Einstein's paper is either not significant or not straightforward?

It is also generally understood among writers that it's difficult to explain complex concepts in a simple way. Programmers favor simpler code too, and often transform complex code into a simpler version that achieves the same functionality, a process called code refactoring. Guess what... refactoring takes substantial effort.

The art of compressing complex ideas into succinct phrases is valued by the general population, as the popularity of quotes and memes shows.

“One should use common words to say uncommon things” ― Arthur Schopenhauer

There is power in simplicity.

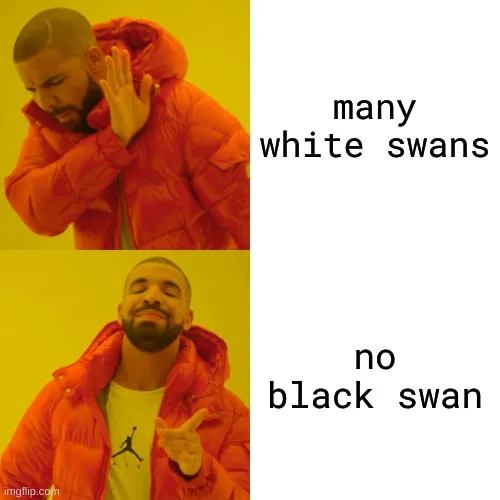

One example of simple ideas with extreme potential is Karl Popper's notion of falsifiability: don't try to prove your beliefs, try to disprove them. That simple principle solves important problems in epistemology, such as the problem of induction and the problem of demarcation. And you don't need to understand all the philosophy behind this notion, only that many white swans don't prove the proposition that all swans are white, but a single black swan does disprove it. So it's more profitable to look for black swans.
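The swan asymmetry can be put in a few lines of Python. This is only an illustrative sketch: `find_counterexample`, `is_white`, and the swan lists are hypothetical names made up for the example, not anything from Popper.

```python
def find_counterexample(claim, observations):
    """Return the first observation that violates the claim, or None if none does."""
    for observation in observations:
        if not claim(observation):
            return observation
    return None


def is_white(swan_color):
    # The universal claim under test: "all swans are white".
    return swan_color == "white"


# A thousand confirming observations: the claim survives, but is not proven.
many_white_swans = ["white"] * 1000
survived = find_counterexample(is_white, many_white_swans)

# One disconfirming observation is enough to refute the claim.
with_one_black_swan = many_white_swans + ["black"]
refutation = find_counterexample(is_white, with_one_black_swan)
```

Here `survived` is `None` no matter how many white swans are added, while `refutation` is `"black"` as soon as a single black swan shows up.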

And we can use simple concepts to defend the power of simplicity.

We can use falsifiability to explain that many simple ideas being inconsequential doesn't prove the claim that all simple ideas are inconsequential, but a single consequential idea that is simple does disprove it.

Therefore I've proved that simple notions can be important.


There is a failure mode in code refactoring. I hope you agree that some people out there, maybe not you, can fail at things like refactoring.

Refactoring is often easy for code-related things, because in the end there is a simple test for whether a refactoring has gone well: just run the code again and see if the results are the same.
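As a minimal sketch of that "run it again and compare" test, assuming a made-up pricing function (the function names and sample inputs are hypothetical, chosen only for illustration):

```python
def total_price_original(items):
    # Original, more convoluted version: index-based loop with manual accumulation.
    total = 0
    for i in range(len(items)):
        price, quantity = items[i]
        if quantity > 0:
            total = total + price * quantity
    return total


def total_price_refactored(items):
    # Refactored version, intended to behave identically.
    return sum(price * quantity for price, quantity in items if quantity > 0)


def same_results(inputs):
    # Run both versions on every input and compare the outputs.
    return all(
        total_price_original(items) == total_price_refactored(items)
        for items in inputs
    )


sample_inputs = [
    [],                    # empty order
    [(2, 3)],              # one line item
    [(5, 0), (1, 4)],      # a zero quantity is skipped
    [(10, -1), (3, 2)],    # a negative quantity is skipped
]
refactoring_ok = same_results(sample_inputs)
```

A passing check only shows that the two versions agree on the inputs that were tried, in the environment where the check ran.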

Imagine if you were coding, but everyone had a different OS, a different browser, and different versions of the code libraries you were using. You can be certain that your code refactoring is safe for your machine at this given moment. But you don't know with certainty that it is also going to run on everyone else's machine.

My point has always been that writing is not so straightforward, and it is closer to the hellish existence where everyone has their own unique OS, browser, and code libraries.

The whole point of refactoring code is that it is doing the same thing in the end. My whole point about writing is that we rarely understand what the hell it is doing in the first place, much less what happens when we change it. Why did reading Scott's stuff convince me so easily, but when I shared it with friends it didn't change their minds at all? Why do you find Scott's writings too long, while I find the length just fine? It is because our minds are different.

I am not trying to argue the impossible case that no writing can ever be simplified. I would just say that simplification is a lossy and imperfect form of data compression, and that what is being lost is probably not apparent to you, because you are probably stripping the things that your mind didn't need in the first place but that others might have needed. If I or others seem "offended" that you claim to be able to compress writing losslessly, then think of it as the same "offense" that physicists feel towards people who claim to have invented perpetual motion machines.

Yes I do, because I follow good programming practices.

I can give you examples where I refactored code and added unit tests to make sure that any and all changes I made retained exactly the same functionality the original code had. If it worked on someone's machine before, it should work on that machine afterwards.

This is not theoretical. I've done these refactorings, and the result works on millions of machines just fine. I can show you the commits.

Only if you don't follow good programming practices.

If you follow simple logically-independent steps, the process cannot fail.

But you can make a guess, and that guess can be right. That's what writing is.

No, not necessarily. Maybe 99.9% of the writers would lose something important in the simplification most of the time, but not all.

So you accept you consider it impossible.

You are not getting me with the whole programming metaphor. If you'd stop thinking that I was questioning your chops as a programmer for two seconds you'd maybe understand.

You have not committed a single piece of code in the theoretical world I made up where everyone has a unique OS. You have committed code in our world, which has basically three OSes you need to worry about.

In both worlds it's not just about you following good coding practices or good writing practices. It's about the people writing the OSes also following good practices. In the theoretical world where there are a billion different OSes and they are nearly all written by amateurs, it doesn't matter how careful you are with your code, because it's gonna be run on top of someone else's shitty code.

Does your program still work if the CPU doesn't know how to add 1+1? Or if the library running your code just randomly decides it's gonna do garbage collection in its own special snowflake way and deletes a bunch of variables you need?

Your current programming ability relies on the fact that the computers it runs on are relatively stable and consistent.

Humans are not stable and consistent. Thus writing for them is not going to be the same as writing for a computer.

I shouldn't have written this last message. I'm done with this conversation. If you were correct about simple writing being effective, then one of us should have convinced the other in the first one or two exchanges.

Wrong. The code I've committed works on OSes you've never heard of.

You keep making assumptions regardless of how many times I've told you the reality.

That world is irrelevant.

No, it doesn't.

But they are real, not hypothetical. The idea-space of human readers is finite.

Only if the convincee was amenable to actually being convinced, and you already conceded you consider the proposition impossible, so there's no way anyone could have convinced you otherwise.
